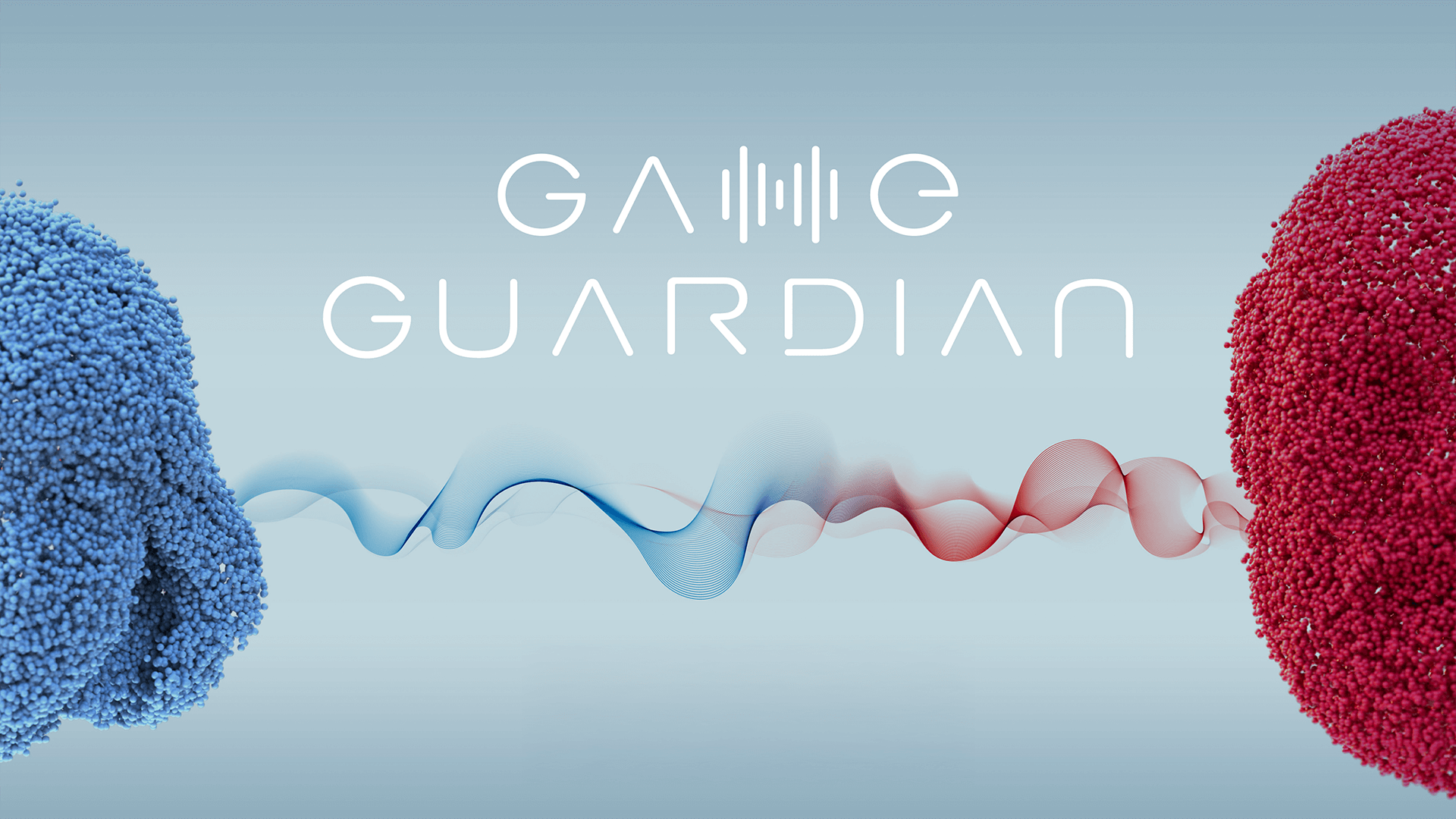

Game Guardian

VML Argentina

Medals Won

- 🥇 GOLD – Best Immersive Experience (Inc Gaming)

- 🥇 GOLD – Best Use of Generative AI

- 🥈 SILVER – Best Use of Predictive AI

Campaign Overview

Artificial intelligence has enabled a dangerous new form of grooming: adults using AI-generated children's voices to approach minors in gaming environments. Movistar responded with Game Guardian, a real-time protection system that detects manipulated voices and grooming-related behavioral patterns. The technology was deployed as a simple Discord bot that is easy to install, easy to use, and accessible to parents, moderators, and gaming communities. A dedicated website educated users and offered direct access to install the bot.

To build awareness at launch, Movistar created an emotional experience for parents: they heard what sounded like a real child's voice, only to learn it was AI-generated and actively used by abusers. This reframed the threat from abstract to immediate. In its first year, Game Guardian protected over 4,500 players, triggered 72 real-time alerts, and enabled moderators to intervene in 14 active cases, establishing a new benchmark for audio-focused digital safety.

Creative Concept

The campaign translated complex AI security into a simple, human experience: a Discord bot that anyone could deploy to protect their community.

- AI Made Tangible: Instead of abstract warnings about online grooming, the campaign embodied protection in a bot with a human-like voice, urgent yet reassuring, turning an invisible threat into something users could see, understand, and control.

- Revealing the Hidden Danger: The creative idea centered on exposing how easily adults can mimic children. By demonstrating manipulated voices in real scenarios, Game Guardian showed the threat as it truly exists: not dramatized, but raw and real.

- Emotionally Driven Adoption: Parents were guided through an immersive experience in which seemingly innocent children's voices were revealed as AI-manipulated simulations used by real groomers. That shock became the emotional turning point, building urgency and trust.

- Behavior-Backed Protection: The bot cross-referenced audio cues with behavioral signals to issue instant alerts, making AI safety feel immediate, intuitive, and actionable for everyday Discord families.

The campaign reframed AI security as a community movement, empowering parents, guardians, and moderators with a tool that felt protective, personal, and essential inside the spaces where children spend time every day.

Execution Strategy

Training AI to Detect the New Reality: The strategy began by addressing a modern blind spot: groomers using AI to impersonate children. Models were trained to recognize commonly used voice filters as well as behavioral grooming markers such as secrecy, isolation attempts, manipulation, and exaggerated flattery. By combining vocal manipulation signals with linguistic and psychological patterns, Game Guardian could identify emerging threats and trigger real-time alerts.

Parent-First Awareness Architecture: Because technology alone was not enough, the launch experience was designed to confront parents directly with the threat. They heard what they believed was a real child, only to learn it was a synthetic, manipulated voice of the kind used by actual predators. This emotional intervention created instant urgency, driving installation, sharing, and community advocacy within minutes.

Discord as the Protection Environment: Discord was selected for its dominance in gaming voice chat and its lack of native voice-protection tools. The bot was engineered for frictionless adoption: a simple install, automatic activation, and instant alerts sent to children, guardians, and moderators. Clear, human-toned notifications ensured transparency and trust.

Community Mobilization Through Trusted Networks: Parents, moderators, and gamers were positioned as the core distribution channel, turning early adopters into protectors. Shareable alerts, visible safety interventions, and community endorsements fueled organic spread, embedding Game Guardian in the spaces where children communicate most.

Impact and Results

The campaign delivered the following results:

- Active for 428+ hours on real Discord servers, demonstrating sustained real-world operation.

- 4,500+ unique players protected, showing meaningful scale in safeguarding vulnerable users.

- 72 automated threat alerts issued, flagging suspected AI-manipulated voices and grooming behaviors.

- 25 parental contact notifications triggered, creating direct safety interventions and reinforcing trust.

- 14 active cases under moderator review, showing the system's ability to escalate credible risks.

- 453 emergency-contact registrations, indicating deep adoption and long-term reliance on the tool.

- Widespread organic sharing by parents, streamers, media, and gaming communities, amplifying reach without paid promotion.

Why It Worked

Game Guardian succeeded because it delivered real-world protection, not just awareness. Its adoption, active hours, player coverage, and alert volume show a system that was used consistently and meaningfully. The bot activated a broader safety network by notifying parents directly and enabling moderators to intervene early, something no gaming platform had previously offered for voice-based threats.

The campaign also succeeded emotionally: parents were moved to act after experiencing the immersive demonstration, and streamers and moderators recommended the bot organically, demonstrating cultural resonance beyond paid media. Most importantly, Game Guardian set a new precedent for digital safety by creating the first voice-focused barrier against AI-assisted grooming and defining what responsible protection must look like in a rapidly evolving online ecosystem.

Client

Movistar

Agency Name

VML Argentina

Categories

Best Immersive Experience (Inc Gaming)

Best Use of Generative AI

Best Use of Predictive AI
